Inkbase is another Programmable Ink research project done at Ink&Switch, together with James Lindenbaum and Joshua Horowitz, during the summer and autumn of 2020.

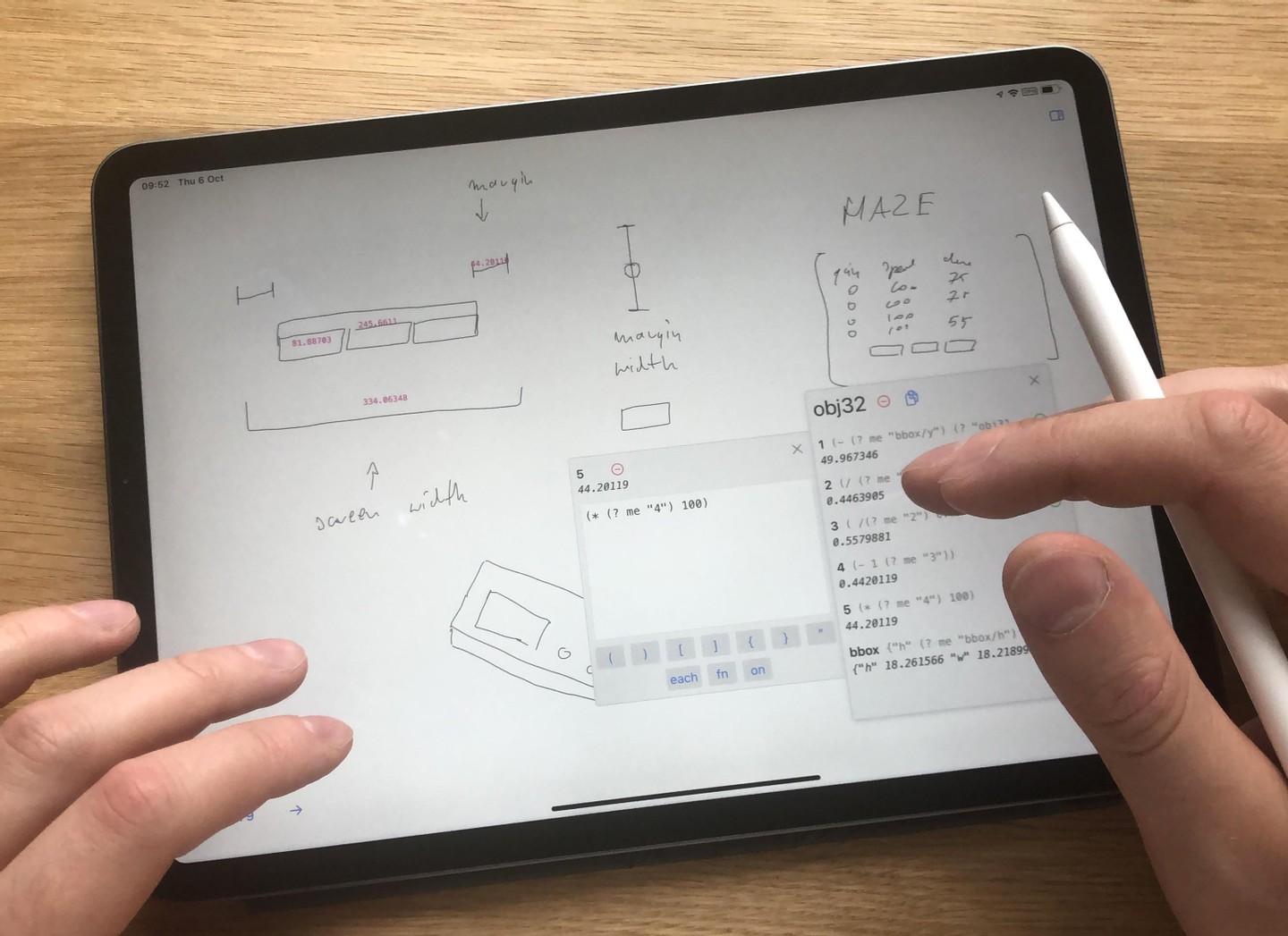

With Inkbase we explored what it would mean to combine hand-drawn ink with spreadsheet-like reactivity, all in an end-user programmable form.

To learn more about the research, head over here for a long write-up covering everything from the philosophy behind hand-drawn marks to the intricacies of the programming model.

Inkbase was presented during my 2022 Strange Loop talk, and at the 2021 LIVE conference.

Spatial messages as a way to communicate between embodied objects. Most programming in Inkbase was done by sketching shapes on the canvas and making them react to each other by asking questions such as "what's to the right of me?" or "is there anything inside that region?". For example, in the logic-gate demo, the pink "wires" are not what makes the logic gates talk to each other; they are there only for user feedback. Instead, each logic gate queries for what's to its left (its inputs) and sends the calculated value to everything to its right.
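To make the idea concrete, here is a minimal Python sketch of spatial querying. It is not Inkbase's actual code or API: the `Shape` class, the `left_of`, `right_of`, and `step` helpers, and the bounding-box test are all assumptions made for illustration. The gate never holds a reference to its inputs or outputs; it only asks the canvas what lies to either side of it.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Shape:
    """A hypothetical canvas object described by its bounding box."""
    name: str
    x: float
    y: float
    w: float
    h: float
    value: Optional[bool] = None  # current signal, if any
    compute: Optional[Callable[[List[bool]], bool]] = None  # set on gates only


def overlaps_vertically(a: Shape, b: Shape) -> bool:
    # True when the two bounding boxes share some vertical extent.
    return a.y < b.y + b.h and b.y < a.y + a.h


def left_of(shape: Shape, canvas: List[Shape]) -> List[Shape]:
    # "What's to the left of me?": shapes whose right edge ends before
    # this shape starts, sorted top to bottom.
    found = [s for s in canvas
             if s is not shape
             and s.x + s.w <= shape.x
             and overlaps_vertically(s, shape)]
    return sorted(found, key=lambda s: s.y)


def right_of(shape: Shape, canvas: List[Shape]) -> List[Shape]:
    # "What's to the right of me?": shapes starting after this shape ends.
    found = [s for s in canvas
             if s is not shape
             and s.x >= shape.x + shape.w
             and overlaps_vertically(s, shape)]
    return sorted(found, key=lambda s: s.y)


def step(gate: Shape, canvas: List[Shape]) -> None:
    # Read inputs from the left, compute, and send the result to the right.
    inputs = [s.value for s in left_of(gate, canvas) if s.value is not None]
    result = gate.compute(inputs)
    for target in right_of(gate, canvas):
        target.value = result


# Two switches stacked on the left, an AND gate in the middle, a lamp on the right.
a = Shape("a", x=0, y=0, w=10, h=10, value=True)
b = Shape("b", x=0, y=20, w=10, h=10, value=True)
gate = Shape("and", x=30, y=0, w=20, h=30, compute=all)
lamp = Shape("lamp", x=70, y=10, w=10, h=10)
canvas = [a, b, gate, lamp]

step(gate, canvas)  # both switches are on, so the lamp turns on
b.value = False
step(gate, canvas)  # one switch is off, so the lamp turns off
```

Moving a switch so that it no longer overlaps the gate vertically would disconnect it without touching any wiring, which is the point of communicating through space. This sketch lets a gate see everything to its left, however far away; chaining several gates would need something extra, such as a distance limit.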

- I went on the Metamuse podcast together with James Lindenbaum to talk mainly about Inkbase, but we also touched on some related topics, including meta-reflections on the research process itself.

- We published the Inkbase essay, which, surprisingly, hung around on the front page of Hacker News for a couple of days.

We hit this earlier on in Inkbase, where a single item was nicely visualized with inspector panes, but groups of objects were not. We tried to explicitly solve this in Crosscut, but the solutions were far from ideal. I also hit this in my research at Glide, where displaying lists of values is the main thing you do.

Crosscut is a research project developed at Ink&Switch in the Programmable Ink thread, together with Marcel Goethals and Ivan Reese. It is a spiritual continuation of our work on End-User Programming (with an essay here) and of the Inkbase project.