Hi,

thanks everyone for great feedback and discussions on Inkbase and Crosscut — I really enjoy hearing your perspectives on this work, and it's also helpful since chatting with you all forces me to get better at articulating my intuitions.

I spent the last three months at Ink & Switch applying the ideas of sketchiness and conversation with some form of other to a stylus-driven logic solver. We're only just starting to write the essay, so expect it sometime later this year. As always, if this sounds up your alley, I'm happy to give you a demo sooner than that. If you're reading this in your inbox, you can reply directly to this email; otherwise you can always reach me at hi@szymonkaliski.com.

I also managed to work on a couple of smaller side-projects: bothering Paul Shen to expose some of Natto's internals, building a simple perspective-transforming document camera, and playing around with a new 3D printer.

I've been chatting with Paul about how Natto could become useful in existing contexts. One idea we played around with is embedding Natto canvases into existing web applications, inspired by the "Long-awaited live programming" talk from Joshua Horowitz. Different parts of the application could be (and sometimes already are!) made of smaller DSLs, and these DSLs, in turn, could have their own development interfaces. You shouldn't have to adopt a whole new toolchain or an experimental code editor to get some nice directly-manipulatable dev UIs.

Paul quickly put together @nattojs/eval which allows you to execute Natto flows outside of the web interface.

Below is a quick narrated demo of working with an external API in Natto, and bringing it into a simple React application:

Natto recently gained multiplayer cursors, and it's fun to imagine collaboratively live-coding parts of your application, and then including them in the main codebase as an asset file (these canvases are just JSON files that could be easily included in a git repo).

You can also imagine having a DEBUG flag which swaps parts of your application to be live-Natto-modifiable.

As you probably know, most of the work I've been doing for a while has been tablet-oriented. The thing we're constantly experimenting with is touch-and-stylus interaction. To visualize what's going on, we usually add an overlay showing finger and stylus positions, but that is just a proxy for actually seeing how the application is used.

To mitigate this, I've built a very simple DIY document camera using:

- a cutting mat with four ArUco markers glued to the corners

- Logitech C920 webcam

- some old lamp arm I had lying around

- a couple of 3D-printed pieces to attach the webcam to the arm

I use the ArUco markers for perspective transformation to fake the camera floating directly above the table.

The code itself is just about 300 lines of Python and OpenCV.
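To make the perspective trick concrete, here is a minimal sketch of the underlying math, not the actual tool. It assumes the four marker positions have already been detected (detection is left out), and the pixel coordinates and output size are made up for illustration. It is kept dependency-free on purpose; with OpenCV, `cv2.getPerspectiveTransform` computes the same 3x3 matrix and `cv2.warpPerspective` applies it to a whole frame.

```python
def solve_linear(a, b):
    """Solve a @ x = b using Gaussian elimination with partial pivoting."""
    n = len(b)
    # Augmented matrix [a | b], copied so the inputs stay untouched.
    m = [[float(v) for v in row] + [float(b[i])] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    # Back substitution.
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        known = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - known) / m[r][r]
    return x


def homography(src, dst):
    """Return the 3x3 matrix that maps four src points onto four dst points."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        # Each point pair contributes two linear equations in the 8 unknowns.
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = solve_linear(a, b)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def warp_point(h, point):
    """Map a single (x, y) camera point into the flattened top-down view."""
    x, y = point
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    u = (h[0][0] * x + h[0][1] * y + h[0][2]) / w
    v = (h[1][0] * x + h[1][1] * y + h[1][2]) / w
    return (u, v)


# Hypothetical marker positions as seen by the tilted webcam (pixels),
# ordered top-left, top-right, bottom-right, bottom-left.
camera_corners = [(412, 180), (1490, 205), (1720, 960), (230, 930)]
# Where those markers should land in the "camera directly above" image.
flat_corners = [(0, 0), (1200, 0), (1200, 900), (0, 900)]

h = homography(camera_corners, flat_corners)
flattened = [warp_point(h, p) for p in camera_corners]
```

Each marker in `camera_corners` ends up (up to floating-point error) on its target in `flat_corners`, and every other pixel on the mat follows the same mapping.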

At first, I tried to prototype this in a browser using OpenCV.js but the performance was pretty bad — which is unsurprising, as the official build is emscripten, not wasm.

I've never really tried the unofficial wasm build, and I just defaulted to what everyone recommends: "just use Python".

Here's a short demo (and a sneak peek at the "sketchy logic solver" project I mentioned in the intro):

And since I know the exact ArUco marker sizes, one additional fun experiment was adding measuring capabilities to the tool:

Of course, this is something that Kevin already explored in detail, concluding that the accuracy is not good enough for use in a CAD context, which I agree with (forget being 1 mm accurate with a 1080p webcam). I'd be curious to try this with a better camera (does anyone know of any inexpensive 4K ones that are not terrible?), but ultimately good calipers probably win here anyway.
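A rough sketch of the measuring arithmetic, assuming it happens in the perspective-corrected image so that one scale factor holds across the whole mat. The marker size, pixel counts, and the 1.5 px localization error are all hypothetical numbers picked for illustration, not measurements from my setup.

```python
import math


def mm_per_pixel(marker_side_mm, marker_side_px):
    """Scale factor derived from a marker whose physical size is known."""
    return marker_side_mm / marker_side_px


def measure_mm(p1, p2, scale):
    """Distance between two image points, converted to millimetres."""
    return math.dist(p1, p2) * scale


def worst_case_error_mm(endpoint_error_px, scale):
    """Both endpoints can be off, so their pixel errors add up."""
    return 2 * endpoint_error_px * scale


# Assumption: a 40 mm marker spans 128 px in the flattened image.
scale = mm_per_pixel(marker_side_mm=40.0, marker_side_px=128.0)

# Assumption: two points picked 640 px apart along a horizontal line.
length_mm = measure_mm((200, 300), (840, 300), scale)

# Assumption: each endpoint is located to within about 1.5 px.
error_mm = worst_case_error_mm(endpoint_error_px=1.5, scale=scale)

print(f"{scale:.4f} mm/px, length {length_mm:.1f} mm, +/- {error_mm:.2f} mm")
```

With numbers in this ballpark a single pixel is already about a third of a millimetre, so a pixel or two of slop at each endpoint eats most of a 1 mm budget before lens distortion even enters the picture.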

While we're on the topic of webcams, I was so happy to stumble upon uvcc which allows you to configure the Logitech-family webcams without using their clunky app.

Finally, I spent an afternoon trying to package this as an .app and failing with both py2app and pyinstaller.

In the end, I made an Automator wrapper around a CLI one-liner, which works great for my use case: starting the app directly from Spotlight search.

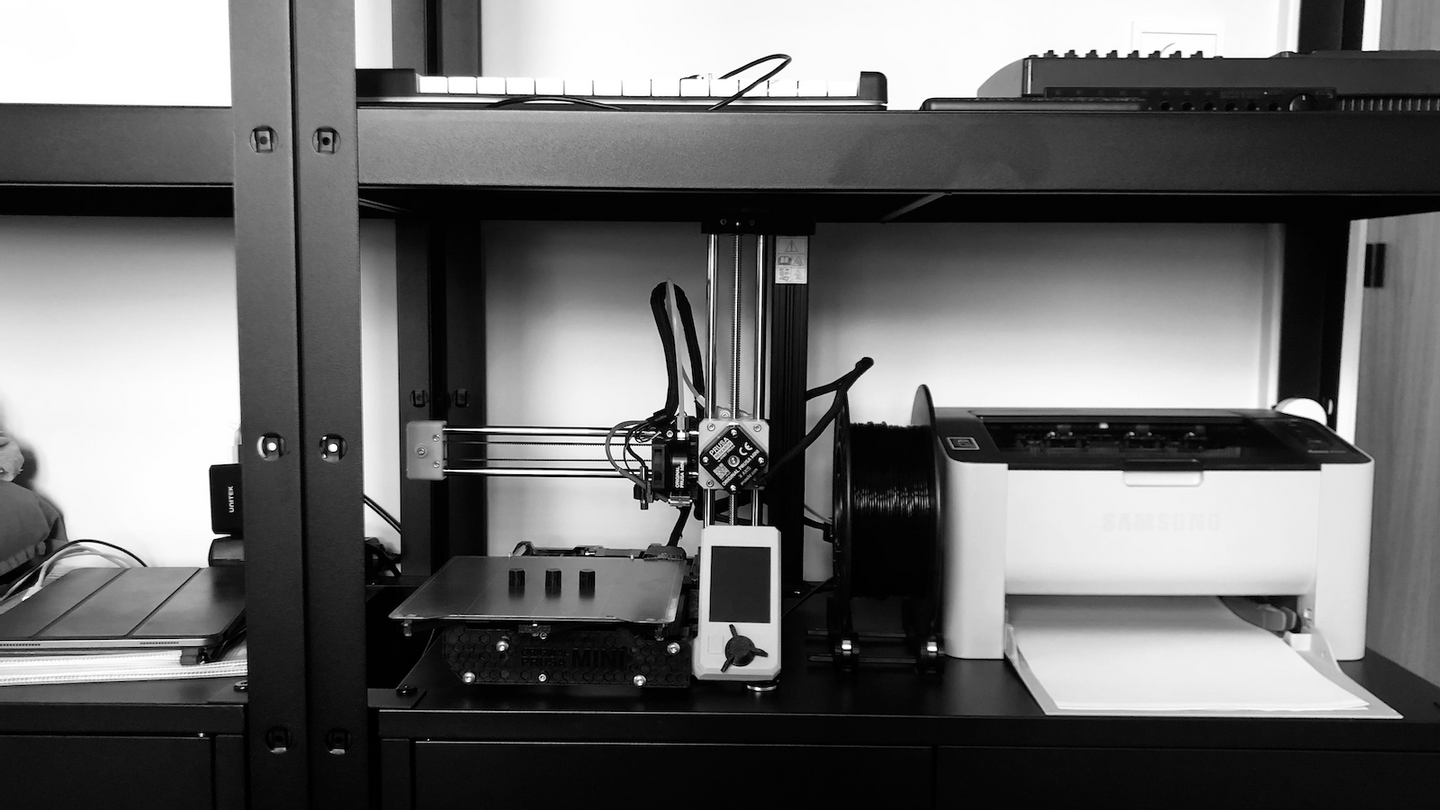

My cheap 3D printer didn't survive moving apartments — not to mention that it was incredibly fiddly, and required a lot of hand-holding to do anything. Which is ok if you want to play around with a 3D printer, but not so great if you actually want to 3D print things.

My needs for printing various little designs were piling up, and I finally had enough brain-space to pick a new one. I went with Prusa MINI+, partly because I never really print bigger things, and partly because it fits perfectly between my shelves:

I was half-expecting Prusa to be only marginally better than my old printer, but I was pleasantly surprised — after a couple of hours of assembly the only adjusting I had to do was making sure the Z-axis is correctly distanced from the heatbed. After that I don't have to do anything other than hit "print" and wait, which is awesome.

I'm writing this from the middle of a vacation road trip from San Francisco to Galiano Island, where I'll spend a week at Gradient Retreat, most probably tinkering with various things.

Once I'm back, I'm going to spend most of my summer as a "Researcher in Residence" at Glide, prototyping a programming environment for building custom UI components that fit with Glide's aesthetics.

Just a quick reminder: I do consulting, specializing in digital tools and no-/low-/future-of-computing research, design & development.

Reach out if that sounds interesting.

I'm going back to the US in late September, this time to give a Programmable Ink talk at Strange Loop (which I'm both excited and nervous about; not sure in which order).

Hope to see some of you there!

Books I enjoyed recently:

- computers, design, cybernetic conversation with the material:

  - Design Cybernetics

  - The Reflective Practitioner

  - The Sciences of the Artificial

- Where the Action Is — some tangible computing, a bit of phenomenology and ethnomethodology, what else do you need?

- Metaphors We Live By and Women, Fire, and Dangerous Things for the impact of language on thinking

- Peak, on deliberate practice

- Coders at Work, especially the interviews with Dan Ingalls and the late Joe Armstrong

- Toward a Theory of Instruction

- Art and Computation — I really enjoyed the argument that computational art has to deal with, and "show", computation — and for that it has to not be functional, not ready-to-hand because then it disappears

On the web:

- if you're not fed up with hearing about Crosscut yet, Ivan made a podcast episode discussing how it differs from Hest

- WFC guy strikes again

- "How Authors Enhance the Readability of Formulas using Novel Visual Design Practices"

- this is what websites should look like

- drawing lambda calculus

- non-keyboard programming at Dynamicland

- and while we're on the topic of logic solvers, Kevin worked on a non-"sketchy" one

Thanks for reading — as always ping me about anything that seems interesting, and in the meantime: have a great summer!
